AI governance frameworks are expanding rapidly — but they are solving the wrong problem.

Most organizations are getting better at managing models, data, and controls.

But when an AI-driven decision causes harm, a simpler question emerges:

Who owns that decision?

In many cases, there is no clear answer.

Governance Has Scaled — Accountability Has Not

Over the past year, AI governance has moved from a technical concern to a board-level risk issue.

Regulators are raising their expectations at an accelerating pace.

Organizations are responding with:

- Model risk frameworks

- Data governance controls

- Oversight committees

- Audit and monitoring processes

On the surface, governance maturity is improving.

But these structures are largely built around systems — not decisions.

They answer questions like:

- Is the model performing as expected?

- Is the data governed appropriately?

- Are controls operating effectively?

They do not answer:

- Who is accountable for the decisions AI influences?

- Where in the lifecycle is that accountability defined?

- What happens when those decisions lead to unintended outcomes?

This breakdown often continues a deeper issue: governance is introduced too late to shape meaningful outcomes, a pattern explored in “AI Governance Is Failing at the Design Phase — Not the Review Phase.”

The Missing Layer: Decision Accountability

Most AI governance models are designed around system accountability.

They focus on how models are built, validated, deployed, and monitored.

But AI systems do not exist in isolation — they exist to inform or automate decisions.

And it is those decisions — not the models themselves — that create real-world impact.

Yet in many organizations:

- Model owners are not decision owners

- Product teams define use cases, but not accountability boundaries

- Risk and compliance functions review controls, but do not own outcomes

- Business units rely on outputs, but do not fully own the decision logic

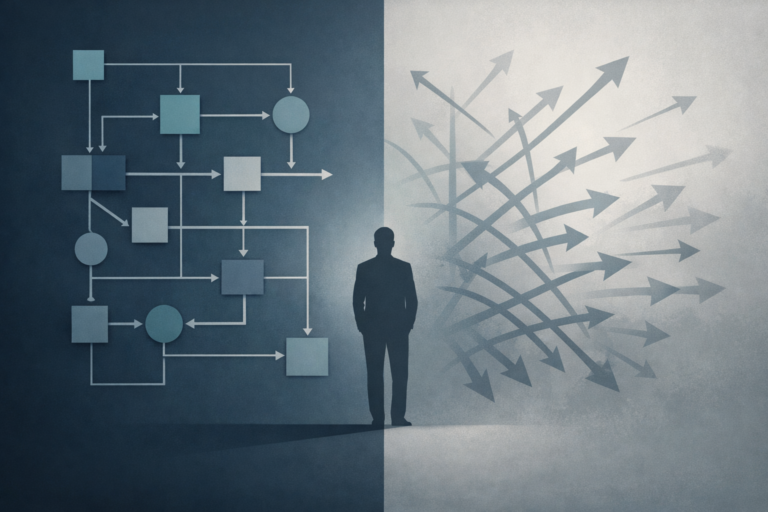

The result is a structural gap:

AI influences decisions. Decisions create impact. But accountability is fragmented.

This mirrors a broader confusion in governance, where controls are mistaken for true oversight, a distinction discussed in “Guardrails Are Not Governance — Why Most AI Deployments Still Fail at the Basics.”

The Accountability Gap

This creates what can be described as an accountability gap in AI decisions.

It doesn’t appear during normal operations.

It becomes visible only under pressure:

- When an AI-driven decision causes financial loss

- When a customer outcome is challenged

- When regulators ask for traceability

- When audit requires end-to-end accountability

At that point, organizations often discover:

- Ownership is distributed across teams

- Decision authority was never formally defined

- Escalation paths are unclear or inconsistent

- Accountability cannot be traced cleanly from design to outcome

The system is governed.

The decision is not.

And without a clearly defined human oversight layer, one that covers intervention and accountability as well as monitoring, this gap persists, as explored in “The Human Oversight Layer: Why AI Governance Needs More Than Just Automation.”

Why This Gap Exists

This gap is not accidental — it is structural.

AI governance is typically introduced at review stages:

- Model validation

- Risk assessment

- Compliance checkpoints

By then, key decisions have already been made:

- What the system is designed to do

- How much autonomy it has

- Where human oversight is expected

- What level of risk is implicitly accepted

These are not purely technical decisions.

They are choices about decision architecture: who decides what, with how much autonomy, and under whose oversight.

And in most organizations, they are made before governance formally engages.

As a result, accountability is never explicitly designed into the system.

It is assumed, distributed, or deferred.
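One way to make accountability explicit instead of assumed is to capture the design-stage choices listed above in a structured record and check it for gaps before review begins. The sketch below is illustrative only: `DecisionRecord`, `Autonomy`, `undefined_choices`, and every field name and example value are hypothetical, not part of any standard or framework.

```python
from dataclasses import dataclass, fields
from enum import Enum
from typing import Optional


class Autonomy(Enum):
    """How much of the decision the system makes on its own (illustrative levels)."""
    ADVISORY = "advisory"        # a human decides, the model informs
    HUMAN_APPROVAL = "approval"  # the model proposes, a human approves
    AUTOMATED = "automated"      # the model decides without review


@dataclass
class DecisionRecord:
    """Hypothetical record of the design-stage choices for one AI-influenced decision."""
    decision: str                          # what the system is designed to decide
    decision_owner: Optional[str] = None   # named person accountable for outcomes
    autonomy: Optional[Autonomy] = None    # how much autonomy the system has
    oversight_point: Optional[str] = None  # where human oversight is expected
    accepted_risk: Optional[str] = None    # what risk was explicitly accepted
    escalation_path: Optional[str] = None  # who is contacted when outcomes deviate


def undefined_choices(record: DecisionRecord) -> list[str]:
    """Return the names of the fields that were never explicitly defined."""
    return [f.name for f in fields(record) if getattr(record, f.name) is None]


# Example: the use case and oversight point exist, but nobody owns the outcome.
record = DecisionRecord(
    decision="Approve or decline consumer credit applications",
    autonomy=Autonomy.HUMAN_APPROVAL,
    oversight_point="Manual review of every decline",
)
print(undefined_choices(record))
# ['decision_owner', 'accepted_risk', 'escalation_path']
```

A record like this does not create accountability on its own. It only makes the missing pieces visible while they can still be designed in.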

Why Regulation Is Exposing the Problem

Regulatory pressure is increasing — but not in the way many organizations expect.

Frameworks such as the National Institute of Standards and Technology (NIST) AI Risk Management Framework and regulations such as the EU AI Act are expanding expectations around AI oversight.

But regulators are not only asking:

- Do you have a governance framework?

- Are your models validated?

They are increasingly asking:

- Who approved this decision structure?

- Who is accountable when outcomes deviate?

- Can you trace responsibility from design through deployment and incident response?

These are not control questions.

They are accountability questions.

And most organizations are not prepared to answer them clearly.
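The traceability question can be treated as a simple check: for a given decision, is there a named accountable person at each lifecycle stage? The sketch below assumes a hypothetical log of sign-offs. The stage names come from the question above, while `SignOff`, `trace`, `untraceable_stages`, and the example roles are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Lifecycle stages taken from the traceability question; the ordering is an assumption.
STAGES = ("design", "deployment", "incident_response")


@dataclass(frozen=True)
class SignOff:
    """Hypothetical record that a named person accepted accountability at a stage."""
    decision_id: str
    stage: str
    accountable: str


def trace(decision_id: str, sign_offs: list[SignOff]) -> dict[str, Optional[str]]:
    """Map each lifecycle stage to its accountable person, or None if nobody signed off."""
    owners = {s.stage: s.accountable for s in sign_offs if s.decision_id == decision_id}
    return {stage: owners.get(stage) for stage in STAGES}


def untraceable_stages(chain: dict[str, Optional[str]]) -> list[str]:
    """List the stages where responsibility cannot be traced to a named person."""
    return [stage for stage, owner in chain.items() if owner is None]


sign_offs = [
    SignOff("credit-approval", "design", "Head of Lending Product"),
    SignOff("credit-approval", "deployment", "Model Risk Lead"),
    SignOff("fraud-hold", "design", "Fraud Operations Manager"),
]

chain = trace("credit-approval", sign_offs)
print(untraceable_stages(chain))
# ['incident_response']
```

In this example the decision is covered at design and deployment, but nobody is named for incident response, which is the kind of gap that tends to surface only when a regulator or auditor asks.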

The Real Governance Question

AI governance has been framed as a control problem.

It is not.

It is a decision accountability problem.

The critical question for leadership is no longer:

“Is the model governed?”

It is:

“Is the decision accountable?”

Until that question is answered — explicitly, early, and structurally — governance will continue to expand in scope without resolving the underlying risk.

Closing Thought

AI does not eliminate accountability.

It redistributes it.

If organizations do not define where that accountability sits — and when it is established — they are not reducing risk.

They are scaling it.